IIS 8.5 BonCode Tomcat Connector

This guide assumes you have already installed Tomcat 7 or 8. Tomcat offers its own connector (mod_jk), but it’s very old and requires a lot of configuration, so I’ll explain how to install and configure the BonCode connector hosted on RIAForge instead. This specific example comes from an installation of HP Service Manager running on Windows 2012 / IIS 8.5.

Installation

Download the latest version from http://tomcatiis.riaforge.org

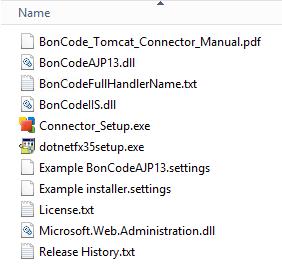

Extract the .zip file (AJP13_v1026.zip) to a temporary folder.

Run “dotnetfx35setup.exe” if .NET 3.5 is not yet installed on the server.

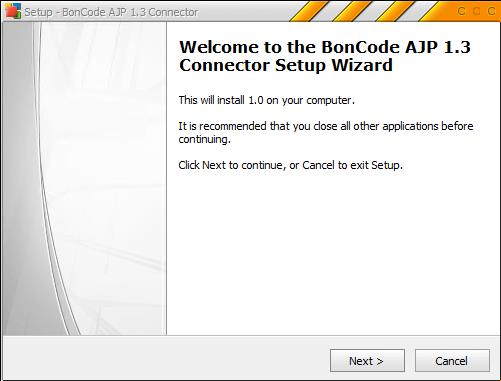

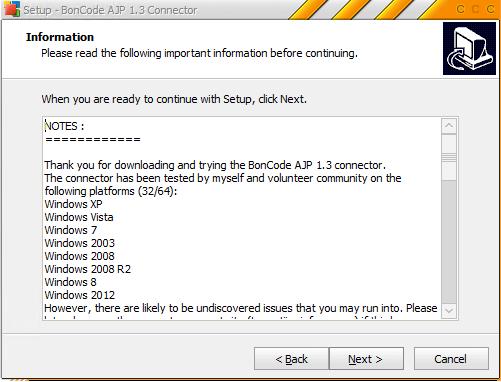

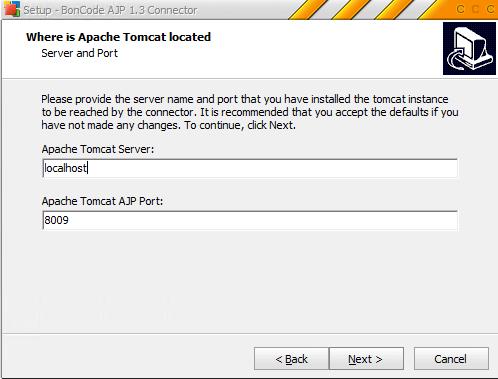

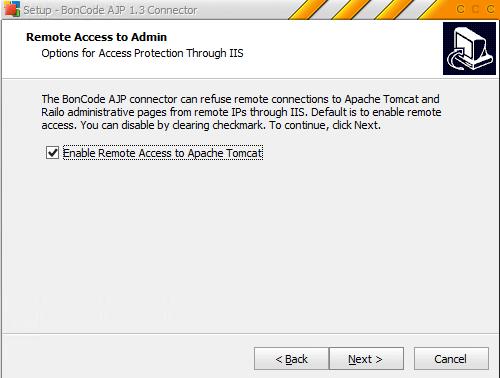

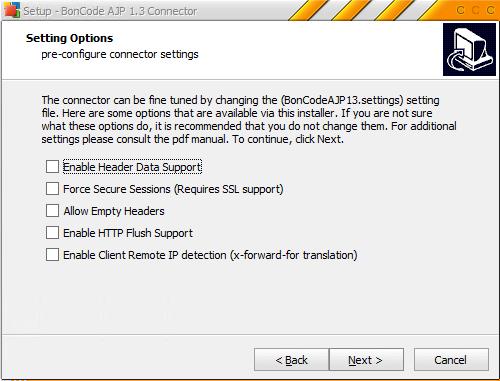

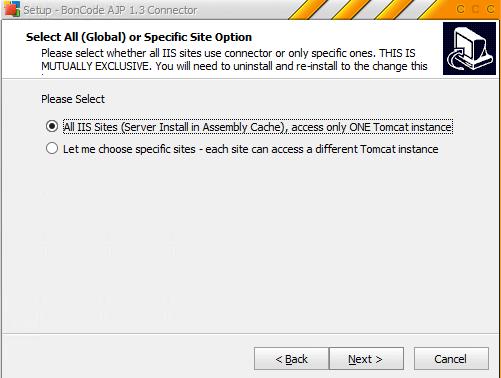

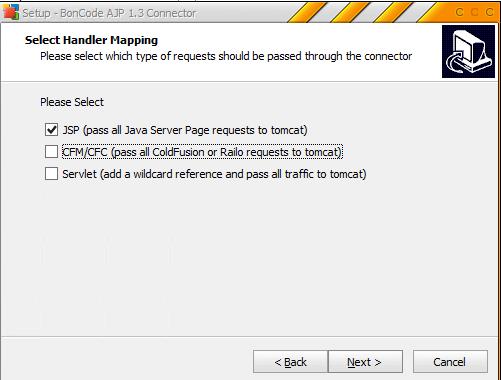

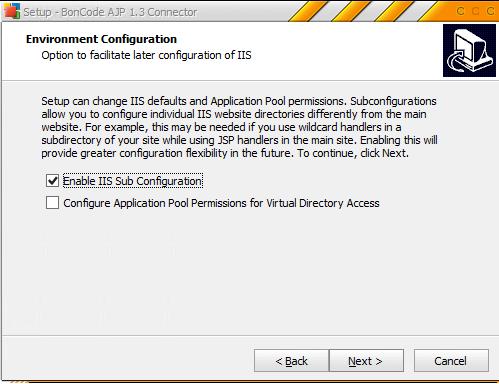

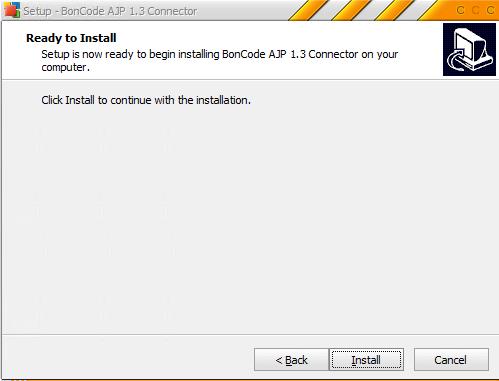

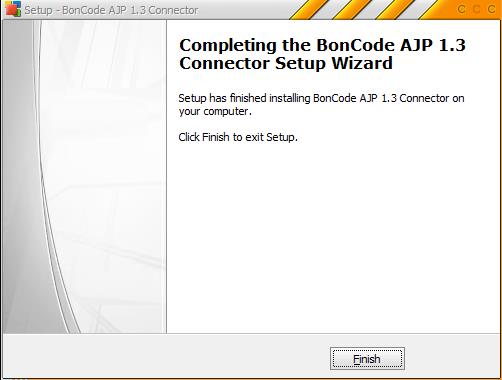

Execute “Connector_Setup.exe”

Configuration

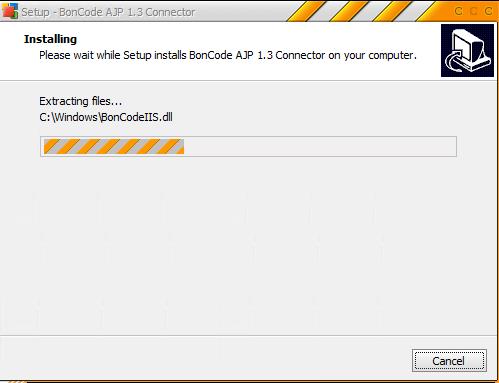

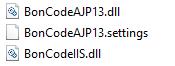

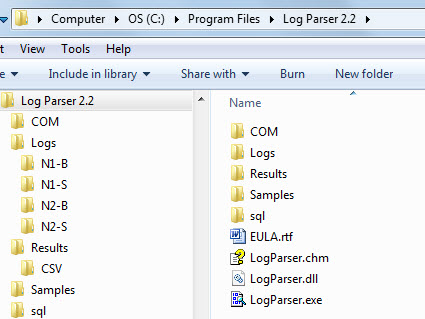

The installer has copied the binaries and configuration file to “C:\Windows”

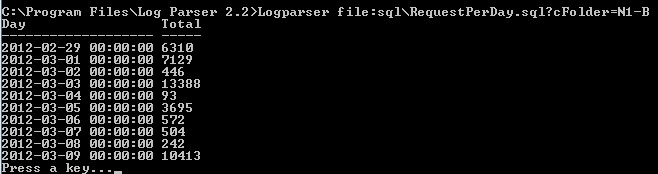

Open the file “BonCodeAJP13.settings” and modify the content as below. Feel free to change the path to LogFiles.

    <Settings>
      <Port>8009</Port>
      <Server>localhost</Server>
      <MaxConnections>200</MaxConnections>
      <LogLevel>1</LogLevel>
      <FlushThreshold>0</FlushThreshold>
      <EnableRemoteAdmin>False</EnableRemoteAdmin>
      <LogDir>E:\LogFiles\APPLogs\BoncodeAJP</LogDir>
      <EnableHTTPStatusCodes>True</EnableHTTPStatusCodes>
    </Settings>

Make sure the IIS Application Pool user has write access to the LogDir folder!
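
The Port and Server values in the settings file only work if Tomcat is listening for AJP on the same host and port. As a rough sketch, assuming a default Tomcat 7/8 install, the matching entry in Tomcat’s conf/server.xml would look something like this (the attribute values are the usual Tomcat defaults, not something taken from the HPSM setup described here):

```xml
<!-- Sketch of the Tomcat side, inside <Service name="Catalina"> in conf/server.xml. -->
<!-- Assumption: default AJP settings; the port must equal <Port> in BonCodeAJP13.settings. -->
<Connector port="8009" protocol="AJP/1.3" redirectPort="8443" />
```

If this connector is commented out or uses a different port, IIS will not be able to reach Tomcat. Note that later Tomcat releases (7.0.100, 8.5.51 and newer) ship with the AJP connector disabled and expect a shared secret by default, so the settings on both sides may need extra attributes there.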

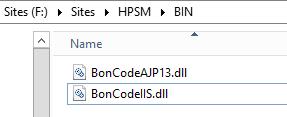

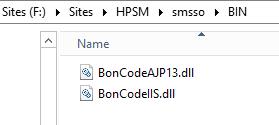

A folder named “BIN” has been created in the root of your website. It will be empty, so copy these two files into it:

- C:\Windows\BonCodeAJP13.dll

- C:\Windows\BonCodeIIS.dll

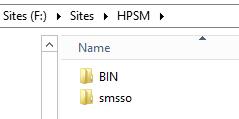

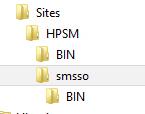

Now we need to tell IIS which folders to forward to Tomcat. For HPSM this will be “smsso”. Create a new folder named “smsso” in the root of the website.

Copy the “BIN” folder into “smsso”.

Now add a handler mapping in IIS. Go to the subfolder named “smsso” and add a new managed handler.

- Request path: *

- Type: [select BonCodeIIS…. from the dropdown]

- Name: BonCodeAll
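
For reference, IIS Manager stores a handler mapping like this in a web.config file inside the folder. The sketch below shows roughly what that file could contain after the steps above. The type name BonCodeIIS.BonCodeCallHandler is an assumption based on the BonCode documentation, so check it against the entry the dropdown actually offers. The verb and preCondition values are also assumptions.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Sketch of smsso\web.config; IIS Manager normally writes this for you. -->
<configuration>
  <system.webServer>
    <handlers>
      <!-- Assumed type name; path="*" forwards every request under this folder to Tomcat. -->
      <add name="BonCodeAll" path="*" verb="*"
           type="BonCodeIIS.BonCodeCallHandler"
           preCondition="integratedMode" />
    </handlers>
  </system.webServer>
</configuration>
```

Keeping the mapping in the “smsso” folder rather than the site root means only requests under that path go to Tomcat, while the rest of the site is still served by IIS.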

Run “iisreset” from an elevated command prompt so that IIS reloads the settings file.
